Date Values

I wanted to take a look at the date values that had made their way into the DPLA dataset from the various Hubs. The first thing that I was curious about was how many unique date strings are present in the dataset, it turns out that there are 280,592 unique date strings.

Here are the top ten date strings, their instance and then if the string is a valid EDTF string.

| Date Value | Instances | Valid EDTF |

| [Date Unavailable] | 183,825 | FALSE |

| 1939-1939 | 125,792 | FALSE |

| 1960-1990 | 73,696 | FALSE |

| 1900 | 28,645 | TRUE |

| 1935 – 1945 | 27,143 | FALSE |

| 1909 | 26,172 | TRUE |

| 1910 | 26,106 | TRUE |

| 1907 | 25,321 | TRUE |

| 1901 | 25,084 | TRUE |

| 1913 | 24,966 | TRUE |

It looks like “[Date Unavailable]” is a value used by the New York Public Library in denoting that an item does not have an available date. It should be noted that NYPL also has 377,664 items in the DPLA that have no date value present at all, so this isn’t a default behavior for items without a date. Most likely it is practice within a single division that denotes unknown or missing dates this way. The value “1939-1939” is used heavily by the University of Southern California. Libraries and seems to come from a single set of WPA Census Cards in their collection. The value “1960-1990” is used primarily for the items in the J. Paul Getty Trust.

Date Length

I was also curious as to the length of the dates in the dataset. I was sure that I would find large numbers of date strings that were four digits in length (1923), ten digits in length (1923-03-04) and other lengths for common highly used date formats. I also figured that there would be instances of dates that were either less than four digits and also longer than one would expect for a date string. Here are some example date strings for both.

Top ten date strings shorter than four characters

| Date Value | Instances |

| * | 968 |

| 昭和3 | 521 |

| 昭和2 | 447 |

| 昭和4 | 439 |

| 昭和5 | 391 |

| 昭和9 | 388 |

| 昭和6 | 382 |

| 昭和7 | 366 |

| 大正4 | 323 |

| 昭和8 | 322 |

I’m not sure what “*” means for a date value, but the other values seem to be Japanese versions of four digit dates (this is what google translate tells me). There are 14,402 records that have date strings shorter than three characters and a total of 522 unique date strings present.

Top ten date strings longer than fifty characters.

| Date Value | Instances |

| Miniature repainted: 12th century AH/AD 18th (Safavid) | 35 |

| Some repainting: 13th century AH/AD 19th century (Safavid | 25 |

| 11th century AH/AD 17th century-13th century AH/AD 19th century (Safavid (?)) | 15 |

| 1927, 1928, 1929, 1930, 1931, 1932, 1933, 1934, 1935, 1936, 1937, 1938, 1939 | 13 |

| 10th century AH/AD 16th century-12th century AH/AD 18th century (Ottoman) | 10 |

| late 11th century AH/AD 17th century-early 12th century AH/AD 18th century (Ottoman) | 8 |

| 5th century AH/AD 11th century-6th century AH/AD 12th century (Abbasid) | 7 |

| 4th quarter 8th century AH/AD 14th century (Mamluk) | 5 |

| L’an III de la République française … [1794-1795] | 5 |

| Began with 1st rept. (112th Congress, 1st session, published June 24, 2011) | 3 |

There are 1,033 items with 894 unique values that are over fifty characters in length. The longest is a “date string” 193 characters, with a value of “chez W. Innys, J. Brotherton, R. Ware, W. Meadows, T. Meighan, J. & P. Knapton, J. Brindley, J. Clarke, S. Birt, D. Browne, T. Dongman, J. Shuckburgh, C. Hitch, J. Hodges, S. Austen, A. Millar,” which appears to be a mis-placement of another field’s data.

Here is the distribution of these items with date strings with fifty characters in length or more.

| Hub Name | Items with Date Strings 50 Characters or Longer |

| United States Government Printing Office (GPO) | 683 |

| HathiTrust | 172 |

| ARTstor | 112 |

| Mountain West Digital Library | 31 |

| Smithsonian Institution | 25 |

| University of Illinois at Urbana-Champaign | 3 |

| J. Paul Getty Trust | 2 |

| Missouri Hub | 2 |

| North Carolina Digital Heritage Center | 2 |

| Internet Archive | 1 |

It seems that a large portion of these 50+ character date strings are present in the Government Printing Office records.

Date Patterns

Another way of looking at dates that I experimented with for this project was to convert a date string into what I’m calling a “date pattern”. For this I take an input string, say “1940-03-22” and that would get mapped to 0000-00-00. I convert all digits to zero, all letters to the letter a and leave all characters that are not alpha-numeric.

Below is the function that I use for this.

def get_date_pattern(date_string):

pattern = []

if date_string is None:

return None

for c in date_string:

if c.isalpha():

pattern.append("a")

elif c.isdigit():

pattern.append("0")

else:

pattern.append(c)

return "".join(pattern)

By applying this function to all of the date strings in the dataset I’m able to take a look at what overall date patterns (and also features) are being used throughout the dataset, and ignore the specific values.

There are a total of 74 different date patterns for date strings that are valid EDTF. For those date strings that are not valid date strings, there are a total of 13,643 date strings. I’ve pulled the top ten date patterns for both valid EDTF and not valid EDTF date strings and presented them below.

Valid EDTF Date Patterns

| Valid EDTF Date Pattern | Instances | Example |

| 0000 | 2,114,166 | 2004 |

| 0000-00-00 | 1,062,935 | 2004-10-23 |

| 0000-00 | 107,560 | 2004-10 |

| 0000/0000 | 55,965 | 2004/2010 |

| 0000? | 13,727 | 2004? |

| [0000-00-00..0000-00-00] | 4,434 | [2000-02-03..2001-03-04] |

| 0000-00/0000-00 | 4,181 | 2004-10/2004-12 |

| 0000~ | 3,794 | 2003~ |

| 0000-00-00/0000-00-00 | 3,666 | 2003-04-03/2003-04-05 |

| [0000..0000] | 3,009 | [1922..2000] |

You can see that the basic date formats yyyy, yyyy-mm-dd, and yyyy-mm very popular in the dataset. Following that intervals are used in the format of yyyy/yyyy and uncertain dates with yyyy?.

Non-Valid EDTF Date Patterns

| Non-Valid EDTF Date Pattern | Instances | Example |

| 0000-0000 | 1,117,718 | 2005-2006 |

| 00/00/0000 | 486,485 | 03/04/2006 |

| [0000] | 196,968 | [2006] |

| [aaaa aaaaaaaaaaa] | 183,825 | [Date Unavailable] |

| 00 aaa 0000 | 143,423 | 22 Jan 2006 |

| 0000 – 0000 | 134,408 | 2000 – 2005 |

| 0000-aaa-00 | 116,026 | 2003-Dec-23 |

| 0 aaa 0000 | 62,950 | 3 Jan 2000 |

| 0000] | 58,459 | 1933] |

| aaa 0000 | 43,676 | Jan 2000 |

Many of the date strings that are represented by these dates have the possibility of being “cleaned up” by simple transforms if that was of interest. I would imagine that converting the 0000-0000 to 0000/0000 would be a fairly lossless transform that would suddenly change over a million items so that they are valid EDTF. Converting the format 00/00/0000 to 0000-00-00 is also a straight-forward transform if you know if 00-00 is mm-dd (US) or dd-mm (non-US). Removing the brackets around four digit years [0000] seems to be another easy fix to convert a large number of dates. Of the top ten non-valid EDTF Date Patterns, it might be possible to convert nine of them with simple transformations to become valid EDTF date strings. This would give the DPLA 2,360,113 additional dates that are valid EDTF date strings. The values for the date pattern [aaaa aaaaaaaaaaa] with a date string value of [Date Unavailable] might benefit from being removed from the dataset altogether in order to reduce some of the noise in the field.

Common Patterns Per Hub

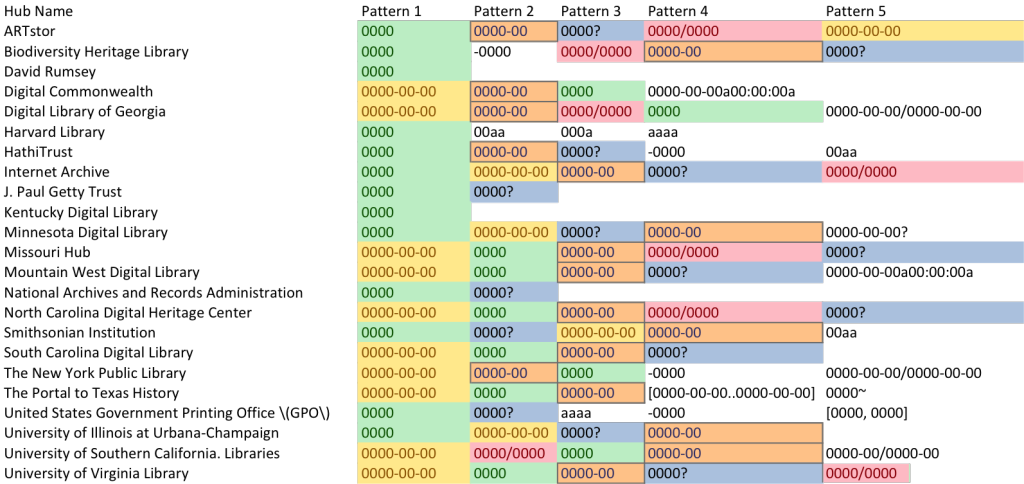

One last thing that I wanted to do was to see i there are any commonalities between the hubs when you look at their most frequently used date patterns. Below I’ve created tables for both valid EDTF date patterns and non-valid EDTF date patterns.

Valid EDTF Patterns

| Hub Name | Pattern 1 | Pattern 2 | Pattern 3 | Pattern 4 | Pattern 5 |

| ARTstor | 0000 | 0000-00 | 0000? | 0000/0000 | 0000-00-00 |

| Biodiversity Heritage Library | 0000 | -0000 | 0000/0000 | 0000-00 | 0000? |

| David Rumsey | 0000 | ||||

| Digital Commonwealth | 0000-00-00 | 0000-00 | 0000 | 0000-00-00a00:00:00a | |

| Digital Library of Georgia | 0000-00-00 | 0000-00 | 0000/0000 | 0000 | 0000-00-00/0000-00-00 |

| Harvard Library | 0000 | 00aa | 000a | aaaa | |

| HathiTrust | 0000 | 0000-00 | 0000? | -0000 | 00aa |

| Internet Archive | 0000 | 0000-00-00 | 0000-00 | 0000? | 0000/0000 |

| J. Paul Getty Trust | 0000 | 0000? | |||

| Kentucky Digital Library | 0000 | ||||

| Minnesota Digital Library | 0000 | 0000-00-00 | 0000? | 0000-00 | 0000-00-00? |

| Missouri Hub | 0000-00-00 | 0000 | 0000-00 | 0000/0000 | 0000? |

| Mountain West Digital Library | 0000-00-00 | 0000 | 0000-00 | 0000? | 0000-00-00a00:00:00a |

| National Archives and Records Administration | 0000 | 0000? | |||

| North Carolina Digital Heritage Center | 0000-00-00 | 0000 | 0000-00 | 0000/0000 | 0000? |

| Smithsonian Institution | 0000 | 0000? | 0000-00-00 | 0000-00 | 00aa |

| South Carolina Digital Library | 0000-00-00 | 0000 | 0000-00 | 0000? | |

| The New York Public Library | 0000-00-00 | 0000-00 | 0000 | -0000 | 0000-00-00/0000-00-00 |

| The Portal to Texas History | 0000-00-00 | 0000 | 0000-00 | [0000-00-00..0000-00-00] | 0000~ |

| United States Government Printing Office (GPO) | 0000 | 0000? | aaaa | -0000 | [0000, 0000] |

| University of Illinois at Urbana-Champaign | 0000 | 0000-00-00 | 0000? | 0000-00 | |

| University of Southern California. Libraries | 0000-00-00 | 0000/0000 | 0000 | 0000-00 | 0000-00/0000-00 |

| University of Virginia Library | 0000-00-00 | 0000 | 0000-00 | 0000? | 0000?-00 |

I tried to color code the five most common EDTF date patterns from above in the following image.

I’m not sure if that makes it clear or not where the common date patterns fall or not.

Non Valid EDTF Patterns

| Hub Name | Pattern 1 | Pattern 2 | Pattern 3 | Pattern 4 | Pattern 5 |

| ARTstor | 0000-0000 | aa. 0000 | aaaaaaa | 0000a | aa. 0000-0000 |

| Biodiversity Heritage Library | 0000-0000 | 0000 – 0000 | 0000- | 0000-00 | [0000-0000] |

| David Rumsey | |||||

| Digital Commonwealth | 0000-0000 | aaaaaaa | 0000-00-00-0000-00-00 | 0000-00-0000-00 | 0000-0-00 |

| Digital Library of Georgia | 0000-0000 | 0000-00-00 | 0000-00- 00 | aaaaa 0000 | 0000a |

| Harvard Library | 0000a-0000a | a. 0000 | 0000a | 0000-0000 | 0000 – a. 0000 |

| HathiTrust | [0000] | 0000-0000 | 0000] | [a0000] | a0000 |

| Internet Archive | 0000-0000 | 0000-00 | 0000- | [0—] | [0000] |

| J. Paul Getty Trust | 0000-0000 | a. 0000-0000 | a. 0000 | [000-] | [aa. 0000] |

| Kentucky Digital Library | |||||

| Minnesota Digital Library | 0000 – 0000 | 0000-00 – 0000-00 | 0000-0000 | 0000-00-00 – 0000-00-00 | 0000 – 0000? |

| Missouri Hub | a0000 | 0000-00-00 | aaaaaaaa 00, 0000 | aaaaaaa 00, 0000 | aaaaaaaa 0, 0000 |

| Mountain West Digital Library | 0000-0000 | aa. 0000-0000 | aa. 0000 | 0000? – 0000? | 0000 aa |

| National Archives and Records Administration | 00/00/0000 | 00/0000 | a’aa. 0000′-a’aa. 0000′ | a’00/0000′-a’00/0000′ | a’00/00/0000′-a’00/00/0000′ |

| North Carolina Digital Heritage Center | 0000-0000 | 00000000 | 00000000-00000000 | aa. 0000-0000 | aa. 0000 |

| Smithsonian Institution | 0000-0000 | 00 aaa 0000 | 0000-aaa-00 | 0 aaa 0000 | aaa 0000 |

| South Carolina Digital Library | 0000-0000 | 0000 – 0000 | 0000- | 0000-00-00 | 0000-0-00 |

| The New York Public Library | 0000-0000 | [aaaa aaaaaaaaaaa] | 0000 – 0000 | 0000-00-00 – 0000-00-00 | 0000- |

| The Portal to Texas History | a. 0000 | [0000] | 0000 – 0000 | [aaaaaaa 0000 aaa 0000] | a.0000 – 0000 |

| United States Government Printing Office (GPO) | [0000] | 0000-0000 | [0000?] | aaaaa aaaa 0000 | 00aa-0000 |

| University of Illinois at Urbana-Champaign | 0-00-00 | a. 0000 | 00/00/00 | 0-0-00 | 00-00-00 |

| University of Southern California. Libraries | 0000-0000 | aaaaa 0000/0000 | aaaaa 0000-00-00/0000-00-00 | 0000a | aaaaa 0000-0000 |

| University of Virginia Library | aaaaaaa aaaa | a0000 | aaaaaaa 0000 aaa 0000? | aaaaaaa 0000 aaa 0000 | 00–? |

With the non-valid EDTF Date Patterns you can see where some of the date patterns are much more common across the various Hubs than others.

I hope you have found these posts interesting. If you’ve worked with metadata, especially aggregated metadata you will no doubt recognize much of this from your datasets, if you are new to this area or haven’t really worked with the wide range of date values that you can come in contact with in large metadata collections, have no fear, it is getting better. The EDTF is a very good specification for cultural heritage institutions to adopt for their digital collections. It helps to provide both a machine and human readable format for encoding and notating the complex dates we have to work with in our field.

If there is another field that you would like me to take a look at in the DPLA dataset, please let me know.

As always feel free to contact me via Twitter if you have questions or comments.